Introduction

Viper is a verification infrastructure that simplifies the development of program verifiers and facilitates rapid prototyping of verification techniques and tools. In contrast to similar infrastructures such as Boogie and Why3, Viper has strong support for permission logics such as separation logic and implicit dynamic frames. It supports permissions natively and uses them to express ownership of heap locations, which is useful to reason about heap-manipulating programs and thread interactions in concurrent software.

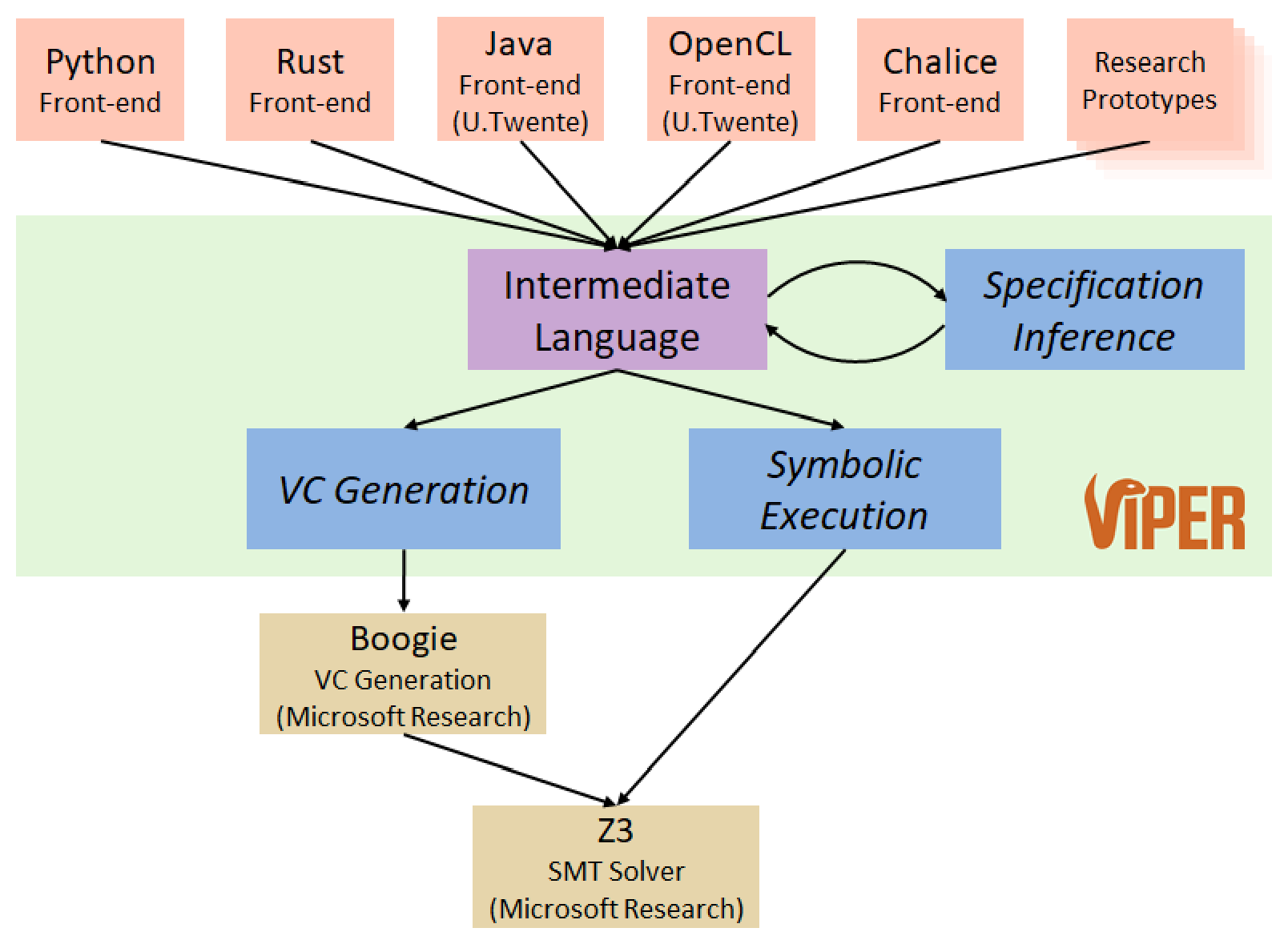

The Viper infrastructure, shown above, provides an intermediate language as well as two verification back-ends, one based on symbolic execution and one based on verification condition generation. Both back-ends ultimately use the SMT solver Z3 to discharge proof obligations. Front-end tools translate different source languages and verification approaches into the Viper language and thereby benefit from its tool support and automation.

The Viper intermediate language is a simple sequential, object-based, imperative programming language. Even though it has been designed as an intermediate language, the Viper language supports both high-level features that are convenient to express verification problems manually as well as powerful low-level features that are useful to encode source languages and verification techniques automatically.

The following simple example shows a method that computes the sum of the first n positive natural numbers. The method declaration includes a precondition (the assertion following requires) to restrict the argument to non-negative values. This expresses the fact that method sum can only be assumed to behave correctly (in particular, do not crash) for non-negative values. The method declaration also includes a postcondition (the assertion following ensures) to guarantee properties of the result variable res. The postcondition is the only information that a caller of the method sum can use to correlate the call result and argument. In particular, in absence of post-condition a caller of method sum cannot make use of the fact that the result is non-negative. This would be required, for example, in order to be able to call sum on its own result. Method preconditions and postconditions together make up a method’s specification.

Viper verifies partial correctness of program statements; that is, verification guarantees that if a program state is reached, then the properties specified at that program state are guaranteed to hold. For example, the postcondition of sum is guaranteed to hold whenever a call to sum terminates. Verification of loops also requires specification: the loop in sum‘s body needs a loop invariant (if omitted, the default loop invariant is true, which is typically not strong enough to prove interesting properties of the program). The loop invariant in sum could also be written in one line with the boolean operator && placed between the two assertions.

method sum(n: Int) returns (res: Int)

requires 0 <= n

ensures res == n * (n + 1) / 2

{

res := 0

var i: Int := 0;

while(i <= n)

invariant i <= (n + 1)

invariant res == (i - 1) * i / 2

{

res := res + i

i := i + 1

}

}

- This tutorial features runnable examples, which use the Viper verifiers. You can run the example by hitting the “play” button - it should verify without errors.

- You can also edit the examples freely, and try out your own versions. Try commenting the

requiresline (the method precondition) - this should result in a verification error. Viper supports both//and/* ... */styles for comments. - Try implementing a recursive version of the

summethod. Note that Viper does not allow method calls within compound expressions; a call tosummust have the formx := sum(e)for some variablexand expressione, and not, e.gx := sum(e) + 42. Does the same specification work also for your recursive implementation? - Try implementing client code which calls the

summethod in order to computes the sum of the first 5 natural numbers. Provide a suitable postcondition.

This tutorial gives an overview of the features of the Viper language and explains their syntax and semantics. We provide examples and exercises throughout, to illustrate the basic usage of these features. We encourage readers to experiment with the examples and often suggest variations of the presented examples to try out. The tutorial does not aim to explain the workings of the Viper verifiers in general, nor the advanced usage of Viper’s language features for building custom verification tools: for these topics, we refer interested readers to our Viper-related research papers.

If you have comments, questions or feedback about Viper, including this tutorial, we would be happy to receive them! Please send your emails to viper@inf.ethz.ch

Structure of Viper Programs

For a type safe Viper program to be correct, all methods and functions in it must be successfully verified against their specifications. The implementation of a Viper method consists of certain imperative building blocks (such as branch conditions, loops, etc.) whereas the specification consists of assertions (contracts, loop invariants, etc.). Statements (or operations) may change the program state, whereas assertions cannot. In contrast, assertions can talk about properties of a particular state — so they can be used to specify the behavior of operations. What the implementation and the specification have in common is that they both make use of expressions. For all of these building blocks, Viper supports different means of abstraction.

Methods can be viewed as a means of abstraction over a sequence of operations (i.e. the execution of a potentially-unbounded number of statements). The caller of a method observes its behavior exclusively through the method’s signature and its specification (its preconditions and postconditions). Viper method calls are treated modularly: for the caller of a method, the method’s implementation can be thought of as invisible. Calling a method may result in modifications to the program state, therefore method calls cannot be used in specifications. On the one hand, the caller of a method must first satisfy the assertions in its precondition in order to obtain the assertions from its postcondition. On the other hand, in order to verify a method itself, Viper must prove that the method’s implementation adheres to the method’s specification.

Functions can be viewed as a means of abstraction over (potentially state-dependent) expressions. Generally, the caller of a function observes three things. First, the precondition of the function is checked in the state in which the function is called. The precondition may include assertions denoting resources, such as permissions to field locations that the the function may read from. Second, the function application’s result value is equated with the expression in the function’s body (if provided); note that this usage of the function’s implementation is a difference from the handling of methods. Third, the function’s postconditions are assumed. The postcondition of a function may not contain resource assertions (e.g. denoting field permissions): all resources from the precondition are automatically returned after the function application. Refer to the section on functions for more details.

Predicates can be viewed as a means of abstraction over assertions (including resources such as field permissions). The body of a predicate is an assertion. Unlike functions, predicates are not automatically inlined: to replace a predicate with its body, Viper provides an explicit unfold statement. An unfold is an operation that changes the program state, replacing the predicate resource with the assertions specified by its body. The dual operation is called a fold: folding a predicate replaces the resources specified by its body with an instance of the predicate itself. Refer to the section on predicates for more details.

Below you can find a brief description and examples of the language constructs that can be used to write a Viper program. Note that the order in which top-level declarations are written is not important, as names are resolved against all declarations of the program (including later declarations).

Language overview

Top-level declarations

Fields

field val: Int

field next: Ref

- Declared by keyword

field - Every object has all fields (there are no classes in Viper)

- See the permission section for examples

Methods

method QSort(xs: Seq[Ref]) returns (ys: Seq[Ref])

requires ... // precondition

ensures ... // postcondition

{

// body (optional)

}

- Declared by keyword

method - Have input and output parameters (e.g.,

xsandys) - Method calls can modify the program state; see the section on statements for details

- Hence calls cannot be used in specifications

- Modular verification of methods and method calls

- The body may include some number of statements (including recursive calls)

- The body is invisible at call site (changing the body does not affect client code)

- The precondition is checked before the method call (more precisely, it is exhaled)

- The postcondition is assumed after the method call (more precisely, it is inhaled)

- See the permission section for more details and examples

Functions

function gte(x: Ref, a: Int): Int

requires ... // precondition

ensures ... // postcondition

{

... // body (optional)

}

- Declared by keyword

function - Have input parameters (e.g.,

xanda) and one output return value - The keyword

resultmay be used in a function’s postcondition to refer to the return value - Function applications may read but not modify the program state

- Function applications can be used in specifications

- Permissions (and resource assertions in general) may be mentioned in a function’s precondition, but not in its postcondition

- If a function has a body, the body is a single expression (possibly including recursive calls to functions)

- Unlike methods, function applications are not handled modularly (for functions with bodies): changing the body of a function affects client code

- See the section on functions for details

Predicates

predicate list(this: Ref) {

... // body (optional)

}

- Declared by keyword

predicate - Have input parameters (e.g.,

head) - Typically used to abstract over assertions and to specify the shape of recursive data structures

- Predicate instances (e.g.

list(x)) are resource assertions in Viper - See the section on predicates for details

Domains

domain Pair[A, B] {

function getFirst(p: Pair[A, B]): A

// other functions

axiom ax_1 {

... // axiom body

}

// other axioms

}

- Declared by keyword

domain - Have a name, which is introduced as an additional type in the Viper program

- Domains may have type parameters (e.g.

AandBabove) - A domain’s body (delimited by braces) consists of a number function declarations, followed by a number of axioms

- Domain functions (functions declared inside a

domain) may have neither a body nor a specification; these are uninterpreted total mathematical functions - Domain axioms consist of name (following the

axiomkeyword), and a definition enclosed within braces (which is a boolean expression which may not read the program state in any way)

- Domain functions (functions declared inside a

- Useful for specifying custom mathematical theories

- See the section on domains for details

Macros

define plus(a, b) (a+b)

define link(x, y) {

assert x.next == null

x.next := y

}

- Declared by keyword

define - C-style, syntactically expanded macros

- Macros are not type-checked until after expansion

- However, macro bodies must be well-formed assertions / statements

- May have any number of (untyped) parameter names (e.g.

aandbabove) - The are two kinds of macros

- Macros defining assertions (or expressions) include the macro definition whitespace-separated afterwards (e.g.

plusabove) - Macros defining statements include their definitions within braces (e.g.

linkabove)

- Macros defining assertions (or expressions) include the macro definition whitespace-separated afterwards (e.g.

- See the array domain encoding for an example

Built-in types

Reffor references (to objects, except for the built-inRefconstantnull)Boolfor Boolean valuesIntfor mathematical (unbounded) integersRationalfor mathematical (unbounded) rationals (this type is expected to be deprecated in the summer 2023 release)Permfor permission amounts (see the section on permissions for details)Seq[T]for immutable sequences with element typeTSet[T]for immutable sets with element typeTMultiset[T]for immutable multisets with element typeTMap[T, V]for immutable maps with key typeTand value typeV- Additional types can be defined via domains

Imports

Local Import:

import "path/to/local.vpr"

Standard Import:

import <path/to/provided.vpr>

Imports provide a simple mechanism for splitting a Viper program across several source files using the local import. Further, it also makes it possible to make use of programs provided by Viper using the standard import.

- A relative or absolute path to a Viper file may be used (according to the Java/Unix style)

importadds the imported program as a preamble to the current one- Transitive imports are supported and resolved via depth-first traversal of the import graph

- The depth-first traversal mechanism enforces that each Viper file is imported at most once, including in the cases of multiple (indirect) imports of the same file or of recursive imports.

Permissions

Introduction

Reasoning about the heap of a Viper program is governed by field permissions, which specify the heap locations that a statement, an expression or an assertion may access (read and/or modify).

Heap locations can be accessed only if the corresponding permission is held by the currently verified method. The simplest form of field permission is the exclusive permission to a heap location x.f; it expresses that the current method may read and write to the location, whereas other methods or threads are not allowed to access it in any way.

Every Viper operation, i.e., every statement, expression, or assertion, has an implicit or explicit specification expressing which field permissions the operation requires, i.e., which locations it accesses. The part of the heap denoted this way is called the footprint of an operation.

Permissions enable preserving information about heap values in Viper: as long as the footprint of an expression is disjoint from the footprint of a method call, it can be concluded that the call does not change the expression’s value, even if the concrete method implementation is unknown. Preserving properties this way is called framing: e.g. we might say that the value of an expression is framed across a method call, or in general, across a statement.

For example: the footprints of the expression x.f == 0 and the field assignment statement x.f := 1 are not disjoint, and the property x.f == 0 can therefore not be framed across the assignment. In contrast, the property could be framed across y.f := 0 if y and x were known to not be aliases. Permissions can also be used to guarantee non-aliasing, as will be discussed in more detail later.

In pre- and postconditions, and generally in assertions, permission to a field is denoted by an accessibility predicate: an assertion which is written acc(x.f). An accessibility predicate in a method’s precondition can be understood as an obligation for a caller to transfer the permission to the callee, thereby giving it up. Consequently, an accessibility predicate in a postcondition can be understood as a permission transfer in the opposite direction: from callee to caller.

A simple example is given next: a method inc that increments the field x.f by an arbitrary value i.

field f : Int

method inc(x: Ref, i: Int)

requires acc(x.f)

ensures true

{

x.f := x.f + i

}

The program above declares a single integer (Int) field f, and the aforementioned increment method. The reference type (Ref) is built in; values of this type (other than the special value null) represent objects in Viper, which are the possible receivers for field accesses. Method inc‘s specification (sometimes called its contract, in other languages) is expressed via its precondition (requires) and postcondition (ensures).

- You can run the example by hitting the “play” button - it should verify without errors.

- Comment out the

requiresline (the method precondition) and re-run the example - this will result in a verification error, since permission to accessx.fis no longer guaranteed to be held. - Implement an additional method that requires permission to

x.fin its precondition and callsinc(x, 0)twice in its body. Does the current specification ofincsuffice here? Can you guess what the problem is, and solve it?

In the remainder of this section we proceed as follows: After a brief description of Viper’s program state, we introduce the basic permission-related features employed by Viper. In particular, we discuss permission-related statements in the Viper language, as well as permission-related assertions, when such assertions are well-defined, and how they can be used to specify properties such as (non-)aliasing. Finally, we present a refined notion of permission supported by Viper: fractional permissions, which enable simultaneous access to the same heap location by multiple methods or (when modelling these in Viper) concurrent threads.

Viper’s Program State

Permissions are a part of a Viper program’s state, alongside the values of variables and heap locations. Fields are only the first of several kinds of resource that will be explained in this tutorial; access to each resource is governed by appropriate permissions. Different permissions can be held at different points in a Viper program: e.g., after allocating new memory on the heap, we would typically also add the permission to access those locations. In the next subsection, we will see the primitive operations Viper provides for manipulating the permissions currently held.

A program state in Viper consists of:

- The values of all variables in scope: these include local variables, method input parameters (which cannot be assigned to in Viper), and method return parameters (which can) of the current method execution. Verification in Viper is method-modular: each method implementation is verified in isolation and, thus, the program state does not contain an explicit call stack.

- The permissions to field locations held by the current method execution.

- The values of those field locations to which permissions are currently held. Other field locations cannot be accessed.

The initial state of each method execution contains arbitrary values (of the appropriate types) for all variables in scope, and no permissions to heap locations. Permissions can be obtained through a suitable precondition (as in the inc example above); preconditions can also constrain the values of parameters and heap locations. Field locations to which permission is newly obtained will also contain arbitrary values.

Inhaling and Exhaling

As previously mentioned, accessibility predicates in a method’s precondition, such as acc(x.f) in the precondition of inc, can be understood as specifying that permission to a field (here x.f) must be transferred from caller to callee.

The process of gaining permission (which happens in the callee), is called inhaling permissions; the opposite process of losing permission (in the caller) is called exhaling. Both operations thus update the amount of currently held permissions: from a caller’s perspective, permissions required by a precondition are removed before the call and permissions guaranteed by a postcondition are gained after the call returns. From a callee’s perspective, the opposite happens.

Similar permission transfers also happen at other points in a Viper program; most notably, when verifying loops: a loop invariant specifies the permissions transferred (1) from the enclosing context to the first loop iteration, (2) from one loop iteration to the next, and (3) from the last loop iteration back to the enclosing context. Inside a loop body, heap locations may only be accessed if the required permissions have been explicitly transferred from the surrounding context to the loop body via the loop invariant.

In addition to specifying which permissions to transfer, Viper assertions may also specify constraints on values, just like in traditional specification languages. For example, a precondition acc(x.f) && x.f > 0 requires permission to location x.f and that its value is positive. Note that the occurrence of x.f inside acc(x.f) denotes the location (in compiler parlance, x.f as an lvalue); the meaning of an accessibility predicate is independent of the value of x.f as an expression (as used, e.g., in x.f > 0).

Consider now the call in the first line of method client in the example below: set‘s precondition specifies that the permission to a.f is transferred from the caller to the callee, and that i must be greater than a.f. Thus, method client has to exhale the permission to a.f (which is inhaled by set) and the caller has to prove that a.f < i (which it currently cannot). Conversely, the postcondition causes the permission to be transferred back to the caller when set terminates, i.e., it is inhaled by method client, and the caller gains the knowledge that a.f == 5.

When verifying method set itself, the opposite happens: permission to x.f is inhaled before the method body is verified, alongside the fact that x.f < i. After the body has been verified, permission to x.f are exhaled and it is checked that x.f‘s value is indeed i.

field f: Int

method client(a: Ref)

requires acc(a.f)

{

set(a, 5)

a.f := 6

}

method set(x: Ref, i: Int)

requires acc(x.f) && x.f < i

ensures acc(x.f) && x.f == i

{

x.f := i

}

- Method

clientfails to verify: the precondition of the callset(a, 5)may not hold. Can you fix this (without modifying methodset)? - Afterwards, add the following call as the last statement to method

client:set(a, a.f). Verification will now fail again. Remedy the situation by slightly weakening methodset‘s precondition. - Finally, comment out the postcondition of method

set. Verification will fail again because methodclientdoes not have permission for the assignment toa.f. When a method call terminates, all remaining permissions that are not transferred back to its caller (via its postcondition) are leaked (lost).

Note that when encoding, e.g., a garbage-collected source language into Viper, the design choice that any excess permissions get leaked is convenient; it allows heap-based data to simply go out of scope and become unreachable. However, in the case of set in the example above, such leaking is presumably not the intention. Viper can also be used to check that certain permissions are not leaked; see the perm expression in the section on expressions for more details.

Inhale and Exhale Statements

To enable the encoding of programming language features that are not directly supported by Viper, such as forking and joining threads or acquiring and releasing locks, Viper allows one to explicitly exhale or inhale permissions via the statements exhale A and inhale A, where A is a Viper assertion such as method set‘s precondition acc(x.f) && i < x.f. From a caller’s perspective, set‘s pre- and postcondition can be seen as syntactic sugar for appropriate exhale and inhale statements before and after a call to the method.

The informal semantics of exhale A is as follows:

- Assert that all value constraints in

Ahold; if not, verification fails - Assert that all permissions denoted (via accessibility predicates) by

Aare currently held; if not, verification fails - Remove the permissions denoted by

A

The informal semantics of inhale A is as follows:

- Add the permissions denoted by

Ato the program state - Assume that all value constraints in

Ahold

As an example, consider the following Viper program (ignoring, for the moment, the commented-out lines):

field f: Int

method set_inex(x: Ref, i: Int) {

// x.f := i

inhale acc(x.f)

x.f := i

// exhale acc(x.f)

// x.f := i

}

Unlike the previous example, this method has no pre- and postcondition (no requires/ensures). This means that we start verification of the method body in a state with no permissions. The statement inhale acc(x.f) causes the corresponding permission to be added to the state, allowing the assignment on the following line to verify.

- Uncomment the first line of the method body. This will cause a verification error (on that line) since we try to access the location

x.fbefore inhaling the necessary permission. - Alternatively, uncomment the last two lines of the method body. This will cause a verification error for the last line, since we exhale the permission

x.fbefore accessing the heap location. - Uncomment the exhale statement and duplicate it, i.e., attempt to exhale permission to

x.ftwice. What happens?

Self-Framing Assertions

Some Viper expressions and assertions come with conditions under which they are well-defined: e.g., partial operations (such as division) must not be applied outside of their domains (such as 1/n if n is potentially zero).

Well-definedness conditions in Viper guarantee not only that assertions have a meaningful semantics, but that this semantics will be consistent across multiple contexts in which specifications are evaluated. Examples are Viper method specifications and loop invariants: preconditions (postconditions) are evaluated both at the beginning (end) of verifying the method body and before (after) each call to the method; loop invariants are evaluated before and after a loop, as well as at the beginning and end of the loop body.

Such assertions are therefore checked to be guaranteed well-defined in all states they can possibly be evaluated in.

As an example, the assertion n < i/j is not well-defined in general; it cannot be used in e.g. a method precondition unless that precondition also guarantees that the value of j cannot be 0. The assertion j > 0 && n < i/j is well-defined, since the first conjunct is well-defined by itself, and ensures the well-definedness of the second conjunct. In general, the (short-circuiting) order of evaluation of logical connectives is taken into account for well-definedness conditions. For example, j != 0 ==> n < i/j is also well-defined (the right hand side’s value is only used when the left hand side is true, which guarantees its well-definedness condition).

Well-definedness in Viper also requires that all heap locations read by the assertion are accessible, i.e., that the corresponding permissions are held. Again, this must be the case for all possible states in which the assertion could be evaluated.

To ensure this property, Viper requires specification assertions to be self-framing: i.e., each such assertion must include permission to at least the locations it reads. As an example, acc(x.f) and acc(x.f) && x.f > 0 are self-framing, whereas x.f > 0 and acc(x.f.g) are not: in the latter two cases, the meanings of the assertions depend on the value of the field x.f, to which permission is not included.

Viper checks well-definedness, and thus also self-framedness, according to a left-to-right evaluation order. The assertion acc(x.f) && 0 < x.f is therefore accepted as self-framing, but 0 < x.f && acc(x.f) is not. This restriction is typically easy to work around in practice.

The assertions in explicit inhale and exhale statements need not be self-framing because they are evaluated in only one program state; Viper will simply check that the well-definedness conditions for their assertions (e.g., that sufficient permissions are held) are true in that program state.

Exclusive Permissions

Permissions to field locations as described so far are exclusive; it is not possible to hold permission to a location more than once. This built-in principle can indirectly guarantee non-aliasing between references: inhaling the assertions acc(x.f) and acc(y.f) implies x != y because otherwise, the exclusive permission to acc(x.f) would be held twice. This is demonstrated by the following program:

field f: Int

method exclusivity(x: Ref, y: Ref) {

inhale acc(x.f)

inhale acc(y.f)

exhale x != y

}

- Comment one of the two inhale statements. Does the exhale statement still succeed?

- Add the third inhale statement

inhale x == yanywhere before the exhale statement and change the latter toexhale false. Can false be asserted? Why does this demonstrate that it is not possible to hold more than one exclusive permission tox.f?

In Viper, accessibility predicates can be conjoined via &&; the resulting assertion requires the sum of the permissions required by its two conjuncts. Therefore, the two statements inhale acc(x.f); inhale acc(y.f) (semicolons are required in Viper only if statements are on the same line) are equivalent to the single statement inhale acc(x.f) && acc(y.f). In both cases, the obtained permissions imply that x and y cannot be aliases.

Intuitively, the statement inhale acc(x.f) && acc(y.f) can be understood as inhaling permission to acc(x.f), and in addition to that, inhaling the permission to acc(y.f). Technically, this conjunction between resource assertions is strongly related to the separating conjunction from separation logic; formal details of the connection (and how to encode standard separation logic into Viper) can be found in this paper.

We can now see how exclusive permissions enable framing and modular verification, as illustrated by the next example below. Here, method client is able to frame the property b.f == 3 across the call to inc(a, 3) because holding permission to both a.f and b.f implies that a and b cannot be aliases, and because method inc‘s specification states that inc only requires the permission to a.f. Since permission to b.f is retained, the value of b.f can be framed across the method call. Informally, and thinking more operationally, the method would not be able to modify this field location, since it lacks the necessary permission to do so.

field f: Int

method inc(x: Ref, i: Int)

requires acc(x.f)

ensures acc(x.f)

ensures x.f == old(x.f) + i

{

x.f := x.f + i

}

method client(a: Ref, b: Ref) {

inhale acc(a.f) && acc(b.f)

a.f := 1

b.f := 3

inc(a, 3)

assert b.f == 3

}

Note:

- The expression

old(x.f)in methodinc‘s postcondition denotes the value thatx.fhad at the beginning of the method call. In general, an old expressionold(e)causes all heap-dependent subexpressions ofe(in particular, field accesses and calls to heap-dependent functions) to be evaluated in the initial state of the corresponding method call. Note that variables are not heap-dependent; their values are unaffected byold. - Method specifications can contain multiple

requiresandensuresclauses; this has the same meaning as if allrequiresassertions were conjoined, and likewise forensures.

- Add the statement

assert a.f == 4to the end of methodclient; it will verify. Comment the second postcondition ofincto make it fail. What happens if you comment the first (but not the second) postcondition? - Add a method

copyAndInc(x: Ref, y: Ref)with the implementationx.f := y.f + 1. Can you give it a specification such that, when invoked ascopyAndInc(a, b)by methodclientin place of the callinc(a, 3), the statementassert b.f == 3 && a.f == 4in methodclientverifies? - In method

client, change the invocation of methodcopyAndInctocopyAndInc(a, a), and change theassertstatement toassert b.f == 3 && a.f == 2. You’ll probably have to change the specifications of methodcopyAndIncto verify the new code.

Fractional Permissions

Exclusive permissions are too restrictive for some applications. For instance, it is typically safe for multiple threads of a source program to concurrently access the same heap location as long as all accesses are reads. That is, read access can safely be shared. However, if any thread potentially writes to a heap location, no other should typically be allowed to concurrently read it (otherwise, the program has a data race). To support encoding such scenarios, Viper also supports fractional permissions with a permission amount between 0 and 1. Any non-zero permission amount allows read access to the corresponding heap location, but only the exclusive permission (1) allows modifications.

The general form of an accessibility predicate for field permissions is acc(e.f, p), where e is a Ref-typed expression, f is a field name, and p denotes a permission amount. Permission amounts are denoted by write for exclusive permissions, none for zero permission, quotients of two Int-typed expressions i1/i2 to denote a fractional permission; any Perm-typed expression may be used here. Perm is the type of permission amounts, which is a built-in type that can be used like any other type. The permission amount parameter p is optional and defaults to write. For example, acc(e.f), acc(e.f, write) and acc(e.f, 1/1) all have the same meaning.

The next example illustrates the usage of fractional permissions to distinguish between read and write access: there, method copyAndInc requires write permission to x.f, but only read permission (we arbitrarily chose 1/2, but any non-zero fraction would suffice) to y.f.

field f: Int

method copyAndInc(x: Ref, y: Ref)

requires acc(x.f) && acc(y.f, 1/2)

ensures acc(x.f) && acc(y.f, 1/2)

{

x.f := y.f + 1

}

- Change the permission amount for

x.fto9/10, i.e., the corresponding accessibility predicates toacc(x.f, 9/10). Where does the code fail (and why)? - Alternatively, change the implementation to

y.f := x.f + 1. - Revert to the original example. Afterwards, change the permission amount for

y.ftonone(or0/1). Where does the code fail (and why)?

Fractional permissions to the same location are summed up: inhaling acc(x.f, p1) && acc(x.f, p2) is equivalent to inhaling acc(x.f, p1 + p2), and analogously for exhaling. As before, inhaling permissions maintains the invariant that write permission to a location are exclusive. With fractional permission in mind, this can be rephrased as maintaining the invariant that the permission amount to a location never exceeds 1.

To illustrate this, consider the next example (and its exercises): there, the assert statement fails because holding one third permission to each x.f and y.f does not imply that x and y cannot be aliases, since the sum of the individual permission amounts does not exceed 1.

field f: Int

method test(x: Ref, y: Ref) {

inhale acc(x.f, 1/2) && acc(y.f, 1/2)

assert x != y

}

- Change both permission amounts to

2/3. Theassertstatement will now verify. - Revert to the original example. Afterwards, replace the

assertstatement byinhale x == yand add the statementexhale acc(x.f, 1/1)to the end of methodtest. The code verifies, which illustrates that permission amounts are summed up. - Revert to the original example. Afterwards, replace the

assertstatement byexhale acc(x.f, 1/8) && acc(x.f, 1/8), and add the subsequent statementexhale acc(x.f, 1/4). The code verifies, which illustrates that permission amounts can be split up. - Revert to the original example. Afterwards, add a third argument

z: Refto the signature of methodtest, add the conjunctacc(z.f, 1/2)to theinhalestatement and change theassertstatement tox != y || x != z. This verifies , but neither disjunct on their own will. Why?

While fractional permissions no longer always guarantee non-aliasing between references, as demonstrated by the previous example, they still enable framing, e.g., across method calls: from a caller’s perspective, holding on to a non-zero permission amount to a location x.f across a method call guarantees that the value of x.f cannot be affected by the call. That is, because the callee would need to obtain write permission, i.e., permission amount 1, which cannot happen as long as the caller retains its fraction.

The next example illustrates the use of fractional permissions for framing: there, method client can frame the property b.f == 3 across the call to copyAndInc because client retains half the permission to b.f across the call. Note that the postcondition of copyAndInc does not explicitly state that the value of y.f remains unchanged.

field f: Int

method copyAndInc(x: Ref, y: Ref)

requires acc(x.f) && acc(y.f, 1/2)

ensures acc(x.f) && acc(y.f, 1/2)

ensures x.f == y.f + 1

{

x.f := y.f + 1

}

method client(a: Ref, b: Ref) {

inhale acc(a.f) && acc(b.f)

a.f := 1

b.f := 3

copyAndInc(a, b)

assert b.f == 3 && a.f == 4

}

- Add the statement

exhale acc(b.f, 1/2)right before the invocation ofcopyAndInc. Since methodclienttemporarily loses all permission tob.f, the propertyb.f == 3can no longer be framed across the call. Note that methodclientcannot deduce modularly (i.e., without considering the body of methodcopyAndInc) thatcopyAndIncdoes not modifyb.f; the method body might inhale the other half permission (e.g., modelling the acquisition of a lock) and thus, be able to assign tob.f. - Can you fix the new code by changing the specifications of method

copyAndInc? - Revert to the original example. Afterwards, modify method

clientas follows: change the invocation of methodcopyAndInctocopyAndInc(a, a), and change theassertstatement toassert b.f == 3 && a.f == 2. To verify the resulting code, you’ll have to change the specifications of methodcopyAndInc. - Can you find specifications for method

copyAndIncthat allow verifying both versions of methodclient: the original implementation (copyAndInc(a, b); assert b.f == 3 && a.f == 4) and the new one (copyAndInc(a, a); assert b.f == 3 && a.f == 2). That is, can you find specifications that work, regardless of whether or not the passed references are aliases?

Predicates

So far, we have only discussed the specification of programs with a statically-finite number of field locations; our specifications must enumerate all relevant locations in order to express the necessary permissions. However, realistic programs manipulate data structures of unbounded size. Viper supports two main features for specifying unbounded data structures on the heap: predicates and quantified permissions.

Viper predicates are top level declarations, which give a name to a parameterised assertion; a predicate can have any number of parameters, and its body can be any self-framing Viper assertion using only these parameters as variable names. Predicate definitions can be recursive, allowing them to denote permission to and properties of recursive heap structures such as linked lists and trees. Viper predicates may also be declared with no body (in this case, the predicate is abstract), which can be useful for representing assertions whose definitions should be hidden for information hiding reasons, or in more advanced applications of Viper, for adding new kinds of resource assertion to the verification problem at hand.

Predicates are introduced in top-level declarations of the form

predicate P(...) { A }

or

predicate P(...)

where P is a globally-unique predicate name, followed by a possibly-empty list of parameters, and A is the predicate body, that is, an assertion that may include usages of P as well as P‘s parameters. Viper checks that predicate bodies are self-framing. Declarations of the second form (that is, without body) introduce abstract predicates.

A predicate instance is written P(e1,...,en), and is a second kind of resource assertion in Viper: as for field permissions, predicate instances can be inhaled and exhaled (added to and removed from the program state), and the Viper program state includes how many instances of which predicates are currently held.

In Viper, a predicate instance is not directly equivalent to the corresponding instantiation of the predicate’s body, but these two assertions can be explicitly exchanged for one another. The statement unfold P(...) exchanges a predicate instance for its body; fold P(...) performs the inverse operation. Abstract predicates cannot be folded or unfolded.

In the following example, the predicate tuple represents permission to the left and right fields of some tuple (note that this is not a keyword in Viper). The method requires permission to the fields this.left and this.right. Intuitively speaking, the tuple predicate required by the precondition contains permissions to the fields this.left and this.right. Holding the predicate is not enough to be allowed to access these fields; the corresponding permissions, however, can be obtained by unfolding the predicate. On the last line of the method’s body, these permission are folded back into the tuple predicate that is given back to the caller.

field left: Int

field right: Int

predicate tuple(this: Ref) {

acc(this.left) && acc(this.right)

}

method setTuple(this: Ref, l: Int, r: Int)

requires tuple(this)

ensures tuple(this)

{

unfold tuple(this)

this.left := l

this.right := r

fold tuple(this)

}

Viper supports assertions of the form unfolding P(...) in A, which temporarily unfolds the predicate instance P(...) for (only) the evaluation of the assertion A. It is useful in contexts where statements are not allowed such as within method specifications and other assertions. For instance, in the example above we could add a postcondition unfolding tuple(this) in this.left == l && this.right == r to express that the entries of the tuple are set to l and r, respectively.

An unfold operation exchanges a predicate instance for its body; roughly speaking, the predicate instance is exhaled, and its body inhaled. Such an operation causes a verification failure if the predicate instance is not held. A fold operation exchanges a predicate body for a predicate instance; roughly speaking, the body is exhaled, and the instance inhaled. In both cases, however, in contrast to a standard exhale operation, these exhales do not remove information about the locations whose permissions have been exhaled because these permissions are still held, but perhaps folded (or no longer folded) into a predicate.

- In the previous example code above, comment out the

unfoldstatement on the first line ofsetTuple. What fails, and why? What if you instead duplicate this statement? - Try the same with the

foldstatement on the last line of the method body. What fails now? - Add the postcondition

unfolding tuple(this) in this.left == l && this.right == rto the original specification. What happens if you remove theunfolding tuple(this) inpart, and why? - After the

fold tuple(this)statement, add the following lineunfold tuple(this); assert this.left == l; fold tuple(this). Why does this assertion succeed? What if youexhaleand theninhalethe predicate instance before theunfoldyou have just added?

Formally, recursive predicate definitions are interpreted with respect to their least fixpoint interpretations; informally, this implies the built-in assumption that any given predicate instance has a finite (but potentially unbounded) number of predicate instances folded within it. Note that a predicate instance may represent a statically-unknown set of permissions. Holding a predicate instance in a Viper state can be thought of as indirectly holding all of these permissions (though unfolding the predicate will be necessary to make direct use of them).

Analogously to field permissions, it is possible for a program state to hold fractions of predicate instances (unlike for field permissions, these can also be permission amounts larger than 1): this is denoted by accessibility predicates of the shape acc(P(...), p). The simple syntax P(...) has the same meaning as acc(P(...)), which in turn has the same meaning as acc(P(...), write). Folding or unfolding a fraction of a predicate effectively multiplies all permission amounts used for resources in the predicate body by the corresponding fraction. In the example below, one half of a tuple predicate is given to the method. Unfolding this half of the predicate yields half a permission for each of the fields this.left and this.right; which are sufficient permissions to read the fields.

method addTuple(this: Ref) returns (sum: Int)

requires acc(tuple(this), 1/2)

ensures acc(tuple(this), 1/2)

{

unfold acc(tuple(this), 1/2)

sum := this.left + this.right

fold acc(tuple(this), 1/2)

}

The next example is an extract from an encoding of a singly-linked list implementation. The predicate list represents permission to the elem and next fields of all nodes in a null-terminated list. The append method requires an instance of the list predicate for its this parameter and returns this predicate instance to its caller. The body unfolds the predicate in order to get access to the fields of this and folds it back before terminating.

The statement n := new(elem, next) models object creation: it assigns a fresh reference to n and inhales write permission to n.elem and n.next. Notice that unfold list(this) will exchange the predicate instance for its body, which includes the predicate instance list(this.next) if this.next != null. This is important to understand why (when the first branch of the if-condition is taken) two fold statements are needed: one for list(n) and another for list(this): since this.next (or n) is no longer null, folding list(this) depends on first folding list(n).

field elem: Int

field next: Ref

predicate list(this: Ref) {

acc(this.elem) && acc(this.next) &&

(this.next != null ==> list(this.next))

}

method append(this: Ref, e: Int)

requires list(this)

ensures list(this)

{

unfold list(this)

if (this.next == null) {

var n: Ref

n := new(elem, next)

n.elem := e

n.next := null

this.next := n

fold list(n)

} else {

append(this.next, e)

}

fold list(this)

}

- Remove the precondition of

appendand observe that verification fails because the predicate instance to be unfolded (on the first line of the method body) is not held. - Change the predicate definition to require all list elements to be non-negative; change the definition of

appendto maintain this property. - Write a method that creates a cyclic list and attempt to fold the list predicate. Why does this fail? Hint: consider what does the assertion

acc(n.elem) && acc(n.elem)mean in the context of separating conjunction.

It is often useful to declare predicates with several arguments, such as the following list segment predicate, which is commonly used in separation logic. The predicate’s first argument denotes the start of the list segment, the second argument its end (i.e., the node directly after the segment) and the third argument, a value-typed mathematical sequence, represents the values stored in the segment.

field elem : Int

field next : Ref

predicate lseg(first: Ref, last: Ref, values: Seq[Int])

{

first != last ==>

acc(first.elem) && acc(first.next) &&

0 < |values| &&

first.elem == values[0] &&

lseg(first.next, last, values[1..])

}

method removeFirst(first: Ref, last: Ref, values: Seq[Int])

returns (value: Int, second: Ref)

requires lseg(first, last, values)

requires first != last

ensures lseg(second, last, values[1..])

{

unfold lseg(first, last, values)

value := first.elem

second := first.next

}

- Implement a

prependmethod that adds an element at the front of the list. You can useSeq(x) ++ sto concatenate a sequencesto a singleton sequence containingx(see the section on sequences for details). Note that verifying your method will most-likely depend on a sequence identity such as(Seq(x) ++ s)[1..] == s. Such identities are not always provided automatically by the current sequence support. In case your example doesn’t verify, try adding the appropriate equality in anassertstatement; this should tell the verifier to prove the equality first, and then use it. - Write a client method which takes an

lsegin its precondition, calls your prepend method to prepend42to the front, and then usesremoveFirstto get this value back. Can you assert afterwards that the returned value is42? What if you extend the specification ofremoveFirst?

Functions

Just as predicates can be used to define parameterised (and potentially-recursive) assertions, Viper functions define parameterised and potentially-recursive expressions. A function can have any number of parameters, and always returns a single value; evaluation of a Viper function (just as any other Viper expression) is side-effect free. Function applications may occur both in code and in specifications: anywhere that Viper expressions may occur.

Functions are introduced in top-level declarations of the form:

function f(...): T

requires A

ensures E1

{ E2 }

or

function f(...): T

requires A

ensures E1

where f is a globally-unique function name, followed by a possibly-empty list of parameters, and a result type T. Functions may have an arbitrary number of pre- and postconditions; each precondition (indicated by the requires keyword) consists of an assertion A, whereas each postcondition (indicated by the ensures keyword) consists of an expression E1. That is, preconditions may contain resource assertions such as accessibility predicates, but postconditions must not (this difference from methods reflects the fact that function applications are side-effect-free, and so the pre- and post-states of a function application are the same; one can think of function preconditions as also being implicit additional postconditions). The result of the function is referred to using the keyword result in postconditions.

The following example defines a function listLength that takes a null-terminated simply-linked list and computes its length. As shown in the body of listLength, function applications are written simply as the function name followed by appropriately-typed arguments in parentheses. The precondition of listLength expresses the fact that the function application can only be evaluated when the corresponding list predicate instance is held, while the post-condition expresses the fact that the length of a list is always a non-negative integer.

field elem: Int

field next: Ref

predicate list(this: Ref) {

acc(this.elem) && acc(this.next) &&

(this.next != null ==> list(this.next))

}

function listLength(l:Ref) : Int

requires list(l)

ensures result > 0

{

unfolding list(l) in l.next == null ? 1 : 1 + listLength(l.next)

}

The function body declaration { E2 } (if provided) must contain an expression E2 of (return) type T; it may contain function invocations, including recursive invocations of f itself. Function declarations of the latter form (that is, without a function body) introduce abstract functions, which may be useful for information hiding reasons, or to model functions which need not or cannot be directly implemented, e.g., because they model externally-justified information about the encoded program (such as the behaviour of library code). The following example illustrates this by adding a capacity function, intended to model a capacity suitable for storing the elements of the list in an array-like container.

function capacity(l:Ref): Int

requires list(l)

ensures listLength(l) <= result && result <= 2 * listLength(l)

Note that functions declared in Viper domains (i.e. domain functions) are considered by Viper to be abstract state-independent total functions. As such, they can neither have a body nor be equipped with any pre-/postconditions; see the domains section for more details. In contrast, top-level functions can be state-dependent; the ability for function preconditions to include permissions allows them to depend on not only the values of their parameters, but also on heap locations to which their preconditions require permissions.

Viper checks that the function body and any postconditions are framed by the preconditions; that is, the preconditions must require all permissions that are needed to evaluate the function body and the postconditions. Moreover, Viper verifies that the postconditions can be proven to hold for the result of the function. In order to enable function termination checks, which are not performed by default, users can specify termination measures, as discussed in the chapter on termination. As the checking of a recursive function definition is essentially a proof by induction on the unrolling of the definition, not checking termination can lead to unsound behaviour. The following example yields such an inconsistency by means of a non-terminating function:

function bad() : Int

ensures 0 == 1

{ bad() }

Due to the least fixpoint interpretation of predicates, any recursive function whose recursive calls occur inside an unfolding expression are guaranteed to be terminating, as in the case of the listLength function above. Consequently, predicates, and other common well-founded orders, are standard termination measures provided by Viper.

For non-abstract functions, Viper reasons about function applications in terms of the function bodies. That is, in contrast to methods, it is not always necessary to provide a postcondition in order to convey

information to the caller of a function. Nevertheless, postconditions are useful for abstract functions and in situations where the property expressed in the postcondition does not directly follow from unfolding the function body once but, for instance, requires induction. In the case of the listLength function, the non-negativity of the result is indeed an inductive property, and is not exploitable by Viper unless stated in the postcondition.

For every function application, Viper checks that the function precondition is true in the current state, and then assumes the value of the function application to be equal to the function body (if provided), as well as assuming any postconditions. Fully expanding function bodies cannot work for recursive functions. Instead, functions are by-default expanded only once; additional expansions are triggered when unfolding or folding a predicate that is mentioned in the function’s preconditions. This feature allows one to traverse recursive structures and simultaneously reason about the permissions and values. For example, since predicate list was mentioned in the precondition of function listLength earlier, the body of any function call listLength(l) is unfolded whenever a predicate list(l) is. This is why the following implementation of the capacity function can be successfully verified:

function capacity(l:Ref): Int

requires list(l)

ensures listLength(l) <= result && result <= 2 * listLength(l)

{ unfolding list(l) in l.next == null

? 1

: unfolding list(l.next) in l.next.next == null

? 2

: 3 + capacity(l.next.next) }

The example below is an alternative version of the previously shown list segment example from the predicates section: instead of using a predicate parameter for the abstract representation of the list segment (as a mathematical sequence), a function is introduced that computes the abstraction. This usage of functions to eliminate predicate parameters which are redundant (in the sense that their values can instead be computed given any predicate instance and its other parameters) is common in Viper.

field elem: Int

field next: Ref

predicate lseg(this: Ref, last: Ref) {

this != last ==>

acc(this.elem) && acc(this.next) &&

this.next != null &&

lseg(this.next, last)

}

function values(this: Ref, last: Ref): Seq[Int]

requires lseg(this, last)

{

unfolding lseg(this, last) in

this == last

? Seq[Int]()

: Seq(this.elem) ++ values(this.next, last)

}

method removeFirst(this: Ref, last: Ref) returns (first: Int, rest: Ref)

requires lseg(this, last)

requires this != last

ensures lseg(rest, last)

ensures values(rest, last) == old(values(this, last)[1..])

{

unfold lseg(this, last)

first := this.elem

rest := this.next

}

The values function requires an lseg predicate instance in its precondition to obtain the permissions to traverse the list. Its body uses an unfolding expression to obtain the predicate instance required for the recursive application.

- Use the

valuesfunction to strengthen the postcondition ofremoveFirstby stating thatfirstwas indeed the first element of the segment. - Extend the example by a

containsfunction that checks whether or not a list segment contains a given element. - Extend the example by implementing an

appendmethod that appends an element to a list segment (similar to the one used in the predicate section). Afterwards:- Use

containsto express that the appended element is contained in the resulting segment. - Alternatively, use

valuesto express that: (1) the given an element has been appended and (2) that the values stored in the rest of the list segment have not been changed.

- Use

Quantifiers

Viper’s assertions can include forall and exists quantifiers, with the following syntax:

forall [vars] :: [triggers] A

exists [vars] :: e

Here [vars] is a list of comma-separated declarations of variables which are being quantified over, [triggers] consists of a number of so-called trigger expressions in curly braces (explained next), and A (and e) is a Viper assertion (respectively, boolean expression) potentially including the quantified variables. Unlike existential quantifiers, forall quantifiers in Viper may contain resource assertions; this possibility is explained in the section on quantified permissions.

Trigger expressions take a crucial role in guiding the SMT solver towards a quick solution, by restricting the instantiations of a quantified assertion. In particular, when a forall-quantified assertion is a hypothesis for a proof goal, the triggers inform the SMT solver to instantiate the quantifier only when it encounters expressions (which are not themselves under a quantifier) of forms matching the trigger. Let’s first illustrate with an example:

assume forall i: Int, j: Int :: {f(i), g(j)} f(i) && i < j ==> g(j)

assert ...f(h(5))...g(k(7))... // some proof goal

Here, assuming that the SMT solver encounters both the expressions f(h(5)) and g(k(7)), the body of the quantification will be instantiated with i == h(5) and j == k(7), obtaining f(h(5)) && h(5) < k(7) ==> g(k(7)). If no other pairs of expressions matching the triggers are encountered, no other instantiations of the quantifier will be made.

In general, a forall quantifier can have any number of sets of trigger expressions; these are written one after the other, each enclosed within braces. Multiple such sets prescribe alternative triggering conditions; multiple expressions within a single trigger set prescribe that expressions matching each of the trigger expressions must be encountered before an instantiation may be made.

You can check how triggers affect the verification in the following examples:

- in

restrictive_triggersthe triggers are too restrictive and do not allow the right instantiation of the quantifier; - in

dangerous_triggersthe bad choice of the triggers leads to an infinite loop of instantiations (in this case, each instantiation results in a new expression which matches the trigger): a problem known as matching loop. In this case, the SMT solver times out without providing an answer. - in

good_triggersthe choice of the triggers allows the SMT solver to quickly provide the right answer, preventing the problematic matching loop of the previous example.

function magic(i:Int) : Int

method restrictive_triggers()

{

// Our definition of `magic`, with a very restrictive trigger

assume forall i: Int :: { magic(magic(magic(i))) }

magic(magic(i)) == magic(2 * i) + i

// The following should verify. However, the verification fails

// because the triggers are too restrictive and the quantifier

// can not be intantiated.

assert magic(magic(10)) == magic(20) + 10

}

method dangerous_triggers()

{

// Our definition of `magic`, with a matching loop

assume forall i: Int :: { magic(i) }

magic(magic(i)) == magic(2 * i) + i

// The following should fail, because our definition says nothing

// about this equality. However, if you uncomment the assertion

// the verification will time out and give no answer because of the

// matching loop caused by instantiating the quantifier.

// assert magic(magic(10)) == magic(87987978) + 10

}

method good_triggers()

{

// Our definition of `magic`

assume forall i: Int :: { magic(magic(i)) }

magic(magic(i)) == magic(2 * i) + i

// The following verifies, as expected

assert magic(magic(10)) == magic(20) + 10

// The following fails, as expected, because our definition says

// nothing about this equality. The verification terminates

// quickly because we don't have matching loops; the SMT solver

// quickly exhausts the available quantifier instantiations.

assert magic(magic(10)) == magic(87987978) + 10

}

There are a number of restrictions on what can be used as a set of trigger expressions:

- Each quantified variable must occur at least once in a trigger set.

- Each trigger expression must include at least one quantified variable.

- Each trigger expression must have some additional structure (typically a function application); a quantified variable alone cannot be used as a trigger expression.

- Arithmetic and boolean operators may not occur in trigger expressions.

- Accessibility predicates (the

acckeyword) may not be used in trigger expressions.

Applications of both domain and top-level Viper functions can be used in trigger expressions, as can field dereference expressions (e.g. x.f) and Viper’s built-in sequence and set operators. Note that the types of trigger expressions are not restricted; in particular, there is no requirement that trigger expressions are boolean-typed.

If no triggers are specified, Viper will infer them automatically with a heuristics based on the body of the quantifier. In some unfortunate cases this automatic choice will not be good enough and can lead to either incompletenesses (necessary instantiations which are not made) or matching loops; it is recommended to always specify triggers on Viper quantifiers.

The underlying tools currently have limited support for existential quantifications: the syntax for exists does not allow the specification of triggers (which play a dual role for existential quantifiers, in controlling the potential witnesses/instantiations considered when proving an existentially-quantified formula), so existential quantifications should be used sparingly due to the risk of matching loops. This limitation is planned to be lifted in the near future.

For more details on triggers and the e-matching approach to quantifier instantiation, we recommend the Programming with Triggers paper.

Quantified Permissions

Viper provides two main mechanisms for specifying permission to a (potentially unbounded) number of heap locations: recursive predicates and quantified permissions. While predicates can be a natural choice for modelling entire data structures which are traversed in an orderly top-down fashion, quantified permissions enable point-wise specifications, suitable for modelling heap structures which can be traversed in multiple directions, random-access data structures such as arrays, and unordered data structures such as general graphs.

The basic idea is to allow resource assertions such as acc(e.f) to occur within the body of a forall quantifier. In particular, the e receiver expression can be parameterised by the quantified variable, specifying permission to a set of different heap locations: one for each instantiation of the quantifier.

As a simple example, we can model a “binary graph” (in which each node has at most two outgoing edges) in the heap, in terms of a set of nodes, using the following quantified permission assertion: forall n:Ref :: { n.first }{ n.second } n in nodes ==> acc(n.first) && acc(n.second). Such an assertion provides permission to access the first and second fields of all nodes n (as explained in the previous section on quantifiers, the { n.first }{ n.second } syntax denotes triggers). To usefully model a graph, one would typically also require the nodes set to be closed under the graph edges, so that a traversal is known to stay within these permissions; this is illustrated in the following example:

field first : Ref

field second : Ref

method inc(nodes: Set[Ref], x: Ref)

requires forall n:Ref :: { n.first } n in nodes ==>

acc(n.first) &&

(n.first != null ==> n.first in nodes)

requires forall n:Ref :: { n.second } n in nodes ==>

acc(n.second) &&

(n.second != null ==> n.second in nodes)

requires x in nodes

{

var y : Ref

if(x.second != null) {

y := x.second.first // permissions covered by preconditions

}

}

- Remove the second conjunct from the first precondition. The example should still verify. Now change the field access in the method body to be

x.first.first. The example will no longer verify, unless you restore the original precondition. - Try instead making the first precondition

requires forall n:Ref :: n in nodes ==> acc(n.first) && n.first in nodes. The example should verify. Try adding an assert statement immediately after the assignment:assert y != null. This should verify - the modified precondition implicitly guarantees thatn.firstis always non-null (for anyninnodes), since it provides us with permission to a field ofn.first. - Try restoring the original precondition:

requires forall n:Ref :: n in nodes ==> acc(n.first) && (n.first != null ==> n.first in nodes). Theassertstatement that you added in the previous point should no-longer verify, since there is no-longer any reason thatn.firstis guaranteed to be non-null.

Receiver Expressions and Injectivity

In the above examples, the receiver expressions used to specify permissions (the e expression in acc(e.f)) were always the quantified variable itself. This is not a requirement; for example, in the following code, quantified permissions are used along with a function address in the exhale statement, to exhale permission to multiple field locations:

field val : Int

function address(i:Int) : Ref

// ensures forall j:Int, k:Int :: j != k ==> address(j)!=address(k)

method test()

{

inhale acc(address(3).val, 1/2)

inhale acc(address(2).val, 1/2)

inhale acc(address(1).val, 1/2)

exhale forall i:Int :: 1<=i && i<=3 ==> acc(address(i).val, 1/2)

}

The expression address(i) implicitly defines a mapping between instances i of the quantifier and receiver references address(i). Such receiver expressions cannot be fully-general: Viper imposes the restriction that this mapping must be provably injective: for any two instances of such a quantifier, the verifier must be able to prove that the corresponding receiver expressions are different. As usual, this condition can be proven using any information available at the particular program point. In addition, injectivity is only required for instances of the quantifier for which permission is actually required; in the above example, the restriction amounts to showing that the references address(1), address(2) and address(3) are pairwise unequal. In the following exercises, this is illustrated more thoroughly.

- In the above example, try uncommenting the postcondition (

ensuresline) attached to theaddressfunction declaration. The complaint about injectivity should then be removed, since the function postcondition guarantees injectivity ofaddress(i)as a mapping fromito receivers. - Re-comment out the function postcondition (and check that the error re-occurs). In the example code above, try changing the permission amounts from

1/2to1/1throughout. For example, changeacc(address(1).val,1/2)toacc(address(1).val, 1/1)(or toacc(address(1).val), which has the same meaning. This will remove the complaint about injectivity: the permissions held after the threeinhalestatements are sufficient to guarantee the required inequalities. - A further alternative is to add instead an additional assumption (somewhere before the

exhalestatement):inhale address(1) != address(2) && address(2) != address(3) && address(3) != address(1). Again, this should make the verifier happy; as also shown in the previous point, these inequalities are sufficient for theexhaleto satisfy the injectivity restriction; there is no requirement foraddress(i)to be injective in general.

The injectivity restriction imposed by Viper has the consequence that, when considering permissions required via quantified permissions, one can equivalently think about these per instantiation of the quantified variable, or per corresponding instance of the receiver expression.

Magic Wands

Note: this section introduces a somewhat advanced feature of the Viper language, which users who are just starting-out with Viper may wish to skip over for the moment.

When reasoning with unbounded data structures, it can often be useful to specify properties of partial versions of these data structures. For example, during an iterative traversal of a linked list, one typically needs a specification relating the prefix of the list already visited, to a view of the overall data structure. Directly specifying such prefixes (or more generally, instances of data structures with a “hole”), tends to lead to auxiliary predicate definitions (e.g. an lseg predicate for list segments), which in turn necessitate additional lemmas or ghost methods for converting between multiple views of the same structure.

Viper includes a powerful alternative mechanism, which is often useful for evading these problems: the magic wand connective. A magic wand assertion is written A --* B, where A and B are Viper assertions. Like permissions to field locations and instances of predicates, magic wands are a type of resource, instances of which can be held in a Viper program state; these can be added and removed from the state via inhale and exhale operations, just as for other resources.

A magic wand instance A --* B abstractly represents a resource which, if combined with the resources represented by A, can be exchanged for the resources represented by B. For example, A could represent the permissions to the postfix of a linked-list (where we are now), and B could represent the permissions to the entire list; the magic wand then abstractly represents the leftover permissions to the prefix of the list. In this case, both the postfix A and a magic wand A --* B could be given up, to reobtain B, describing the entire list. This “giving up” step, is called applying the magic wand, and is directed in Viper with an apply statement:

inhale A

inhale A --* B

apply A --* B

assert B // succeeds; would fail before the apply statement

To understand a typical use-case for magic wands more concretely, consider the following iterative code for appending two linked lists:

field next : Ref

field val : Int

method append_iterative(l1 : Ref, l2: Ref)

{

if(l1.next == null) { // easy case

l1.next := l2

} else {

var tmp : Ref := l1.next

while(tmp.next != null)

{

tmp := tmp.next

}

tmp.next := l2

}

}

Using a standard linked-list predicate list, and a function elems to fetch the sequence of elements stored in a linked-list, we can specify the intended behaviour of our method, and add a first-attempt at a loop invariant, as follows:

field next : Ref

field val : Int

predicate list(start : Ref)

{

acc(start.val) && acc(start.next) &&

(start.next != null ==> list(start.next))

}

function elems(start: Ref) : Seq[Int]

requires list(start)

{

unfolding list(start) in (

(start.next == null ? Seq(start.val) :

Seq(start.val) ++ elems(start.next) ))

}

method append_iterative(l1 : Ref, l2: Ref)

requires list(l1) && list(l2) && l2 != null

ensures list(l1)

ensures elems(l1) == old(elems(l1) ++ elems(l2))

{

unfold list(l1)

if(l1.next == null) { // easy case

l1.next := l2; fold list(l1)

} else {

var tmp : Ref := l1.next

var index : Int := 1 // extra variable: useful for specification

while(unfolding list(tmp) in tmp.next != null)

invariant index >= 0

invariant list(tmp)

// what about the prefix of the list?

{

unfold list(tmp)

var prev : Ref := tmp // extra variable: useful for specification

tmp := tmp.next

index := index + 1

}

unfold list(tmp)

tmp.next := l2

fold list(tmp)

// how do we get back to list(l1) ?

}

}

In this version of the code, we’ve added the extra variables index (representing how far through the linked-list the tmp reference is), and prev; both will be convenient for writing a specification later in this section. As commented in the file, the specification is not sufficient to verify the code. The loop invariant tracks permission to the postfix linked-list (referenced by tmp). However, it includes neither permissions nor value information about the prefix of the list between l1 and the tmp reference. Since these permissions are not tracked by the loop invariant, they are effectively leaked during the loop execution; with the loop invariant given there is no way after the loop to obtain permission to the entire list (the predicate instance list(l1)), as required in the postcondition.

- Run the example code above. The check of the postcondition should fail, since the required predicate instance

list(l1)is not held after the loop. - Try changing the loop invariant by adding the additional conjunct

&& elems(tmp) == old(elems(l1))[index..]at the end. Re-run the example - the behaviour should be unchanged. This conjunct expresses that the elements fromtmponwards have not been modified so far. - Instead of this additional conjunct, why can’t we simply write

&& elems(tmp) == old(elems(tmp))? You might like to try making this change, and running the example. Recall thatoldexpressions do not affect the evaluation of local variables; the reason is not to do withtmp‘s value directly. Consider instead the precondition ofelems; which predicate instances were held in the pre-state of the method?

We can now employ magic wands to retain the lost permissions. In order to retain (during execution of the loop) the permissions to the previously-visited nodes in the list, we use a magic wand list(tmp) --* list(l1). This magic wand represents sufficient resources to guarantee that if we give up a list(tmp) predicate along with this wand, we can obtain a list(l1) predicate; conceptually, it represents the permissions to the earlier segment of the list between l1 and tmp.

field next : Ref

field val : Int

predicate list(start : Ref)

{

acc(start.val) && acc(start.next) &&

(start.next != null ==> list(start.next))

}

function elems(start: Ref) : Seq[Int]

requires list(start)

{

unfolding list(start) in (

(start.next == null ? Seq(start.val) :

Seq(start.val) ++ elems(start.next) ))

}

method appendit_wand(l1 : Ref, l2: Ref)

requires list(l1) && list(l2) && l2 != null

ensures list(l1) // && elems(l1) == old(elems(l1) ++ elems(l2))

{

unfold list(l1)

if(l1.next == null) { // easy case

l1.next := l2; fold list(l1)

} else {

var tmp : Ref := l1.next

var index : Int := 1

// package the magic wand required in the loop invariant below

package list(tmp) --* list(l1)

{ // show how to get from list(tmp) to list(l1):

fold list(l1) // also requires acc(l1.val) && acc(l1.next)

}

while(unfolding list(tmp) in tmp.next != null)

invariant index >= 0

invariant list(tmp)// && elems(tmp) == old(elems(l1))[index..]

invariant list(tmp) --* list(l1) // magic wand instance

{

unfold list(tmp)

var prev : Ref := tmp

tmp := tmp.next

index := index + 1

package list(tmp) --* list(l1) // package new magic wand

{ // we get from list(tmp) to list(l1) by ...

fold list(prev)

apply list(prev) --* list(l1)

}

}

unfold list(tmp)

tmp.next := l2

fold list(tmp)

apply list(tmp) --* list(l1) // regain predicate for whole list

}

}

The additional loop invariant includes an instance of a magic wand: list(tmp) --* list(l1). Such a magic wand instance denotes a new kind of resource in Viper (in addition to field permissions and predicate instances); as such, it can be inhaled and exhaled just as other resource assertions. This particular magic wand instance can (when applied), be used up to exchange any list(tmp) predicate instance for a list(l1) predicate instance. In this example, the magic wand notionally represents the permissions to the prefix of the list between l1 and tmp. These magic wand instances are created via package operations, which are explained below.